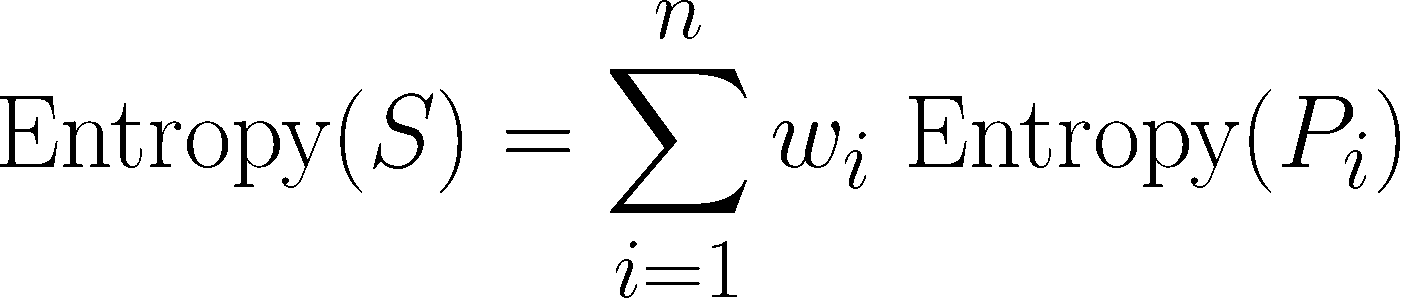

Quantifying Randomness: Entropy, Information Gain and Decision Trees

Entropy

Entropy is a measure of expected "surprise": essentially, how uncertain we are of the value drawn from some distribution. The higher the entropy, the more unpredictable the outcome. For example, if I asked you to predict the outcome of a regular fair coin, you would have a \(50\%\) chance of being correct. If instead I used a coin for which both sides were tails, you could predict the outcome correctly \(100\%\) of the time. Entropy helps us quantify how uncertain we are of an outcome.

We can redefine entropy as the expected number of bits one needs to communicate any result from a distribution:

\[ H(X) = -\sum_x p(x)\log_2 p(x) \]

So the fair coin has an entropy of 1 bit, while the two-tailed coin has an entropy of 0. The number of possible outcomes serves as a decent guideline for guessing what the entropy should be (with \(n\) equally likely outcomes, \(H(X) = \log_2 n\), the maximum possible). However, this type of reasoning does not always work: think of a scenario with hundreds of outcomes, all dominated by one occurring \(99.999\%\) of the time, where the entropy is close to 0.

For a set \(S\) of positive and negative examples with proportions \(p_+\) and \(p_-\), this becomes \(\text{Entropy}(S) = -p_+\log_2 p_+ - p_-\log_2 p_-\). Note that entropy is 0 if all the members of \(S\) belong to the same class. For example, if all members are positive (\(p_+ = 1\)), then \(p_- = 0\) and \(\text{Entropy}(S) = -1\cdot\log_2 1 - 0\cdot\log_2 0 = 0\), using the convention \(0\log_2 0 = 0\). Generalizations of this idea also exist: motivated by Deng entropy, for example, one can measure the degree of uncertainty of a Basic Belief Assignment (BBA) in uncertain problems.

Decision tree learning is a supervised learning approach used in statistics, data mining and machine learning. In this formalism, a classification or regression decision tree is used as a predictive model to draw conclusions about a set of observations. I'm going to show you how a decision tree algorithm would decide which attribute to split on first: out of two features, which one provides more information, or reduces more uncertainty, about our target variable, using the concepts of entropy and information gain.

Gini impurity and entropy (the measure behind information gain) are pretty much the same, and people do use the values interchangeably. The two formulas closely resemble one another:

\[ \text{Gini}(X) = \sum_x p(x)\bigl(1 - p(x)\bigr), \qquad H(X) = -\sum_x p(x)\log_2 p(x) \]

The primary difference between the two is the factor \(1 - p(x)\) versus \(-\log_2 p(x)\). In practice, the Gini index and entropy typically yield very similar results, and it is often not worth spending much time evaluating decision tree models with different impurity criteria. As for which one to use, maybe consider the Gini index: it doesn't require computing a logarithm, which can make it a bit computationally faster.
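To make these formulas concrete, here is a minimal Python sketch, assuming discrete outcomes and categorical features. The helper names (`entropy`, `gini`, `class_probs`, `information_gain`) are hypothetical and not taken from any library. Information gain is computed in the usual ID3 style, as the parent set's impurity minus the size-weighted impurity of the child sets produced by a split; passing `impurity=gini` gives the Gini-based analogue.

```python
import math
from collections import Counter


def entropy(probs):
    """Shannon entropy in bits: H = -sum p*log2(p), written as sum p*log2(1/p)."""
    # Skipping p == 0 implements the usual convention 0 * log2(0) = 0.
    return sum(p * math.log2(1 / p) for p in probs if p > 0)


def gini(probs):
    """Gini impurity: sum p*(1 - p), which equals 1 - sum p^2."""
    return sum(p * (1 - p) for p in probs)


def class_probs(labels):
    """Empirical class proportions of a list of labels."""
    n = len(labels)
    return [count / n for count in Counter(labels).values()]


def information_gain(labels, feature_values, impurity=entropy):
    """Parent impurity minus the size-weighted impurity of the child sets
    obtained by splitting the examples on one categorical feature."""
    n = len(labels)
    children = {}
    for value, label in zip(feature_values, labels):
        children.setdefault(value, []).append(label)
    weighted = sum(len(child) / n * impurity(class_probs(child))
                   for child in children.values())
    return impurity(class_probs(labels)) - weighted


# Coins: a fair coin vs. one with tails on both sides.
print(entropy([0.5, 0.5]))  # 1.0
print(entropy([1.0]))       # 0.0

# 300 outcomes, one of which occurs 99.999% of the time.
skewed = [0.99999] + [0.00001 / 299] * 299
print(entropy(skewed) < 0.001 < math.log2(300))  # True

# Toy decision-tree split (made-up data): which feature should we split on first?
target = ["yes", "yes", "no", "no"]
feature_a = ["a", "a", "b", "b"]  # separates the classes perfectly
feature_b = ["x", "y", "x", "y"]  # unrelated to the target
print(information_gain(target, feature_a))                 # 1.0
print(information_gain(target, feature_b))                 # 0.0
print(information_gain(target, feature_a, impurity=gini))  # 0.5
```

The toy labels and features are invented for illustration: `feature_a` removes all uncertainty about the target, so a tree would split on it first, while `feature_b` tells us nothing. Both criteria rank the two features the same way here, which is consistent with the observation that Gini and entropy usually agree.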
